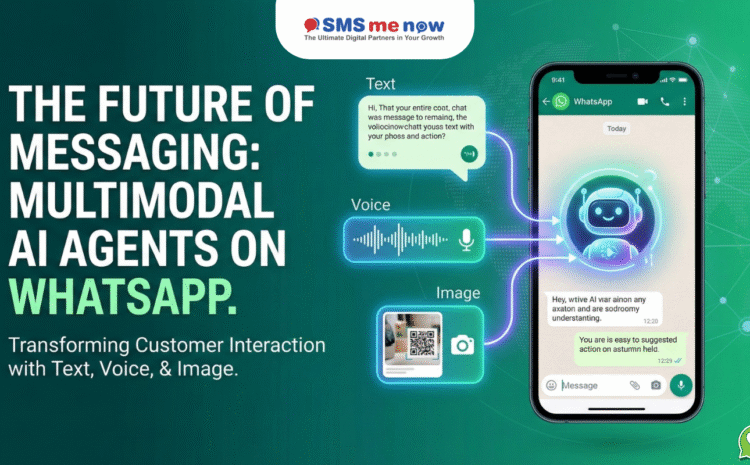

The Future of Messaging: Multimodal AI Agents (Text, Voice, & Image) on WhatsApp

The way we communicate is undergoing a seismic shift. In 2026, the era of rigid, text-only chatbots is officially over. As digital fatigue grows, customers are demanding more natural, human-like interactions. This has paved the way for Multimodal AI Agents on WhatsApp: intelligent assistants that don’t just “read” your text, but also “hear” your voice and “see” your images.

By integrating text, voice, and visual processing into a single, unified interface, businesses in Jaipur and across the globe are transforming WhatsApp from a mere messaging app into a comprehensive, 24/7 service and sales hub.

What is Multimodal AI? (The 2026 Definition)

Multimodal AI refers to artificial intelligence systems capable of processing and interpreting multiple types of data—or “modalities”—simultaneously. Unlike traditional AI, which is limited to one stream (like text), Multimodal AI functions more like a human brain.

The Three Pillars of Multimodality:

- Text (Natural Language): The agent understands context, slang, and intent, allowing for fluid conversations.

- Voice (Speech & Audio): The agent can “listen” to voice notes, transcribe them in real time, and even detect the speaker’s emotional state through pitch and tone.

- Image (Computer Vision): The agent can analyze photos uploaded by the user—whether it’s a receipt, a damaged product, or a handwritten note—and extract actionable data.
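
The three pillars above can be sketched as a small router that looks at each incoming message and picks a processing pipeline. This is a minimal illustration, not a production integration: the message dictionaries are loosely modelled on webhook-style payloads with a `type` field, and the handler names (`language_model`, `speech_to_text`, `vision_model`, `human_agent`) are hypothetical placeholders.

```python
from typing import Any, Dict

# Hypothetical pipeline names. A real system would call a language model,
# a speech-to-text service, or a vision model at this point.
HANDLERS = {
    "text": "language_model",
    "audio": "speech_to_text",
    "image": "vision_model",
}


def route_message(message: Dict[str, Any]) -> str:
    """Return the name of the pipeline that should process this message."""
    modality = message.get("type", "")
    # Anything the agent does not understand (stickers, locations, ...)
    # is sent to a human instead of being guessed at.
    return HANDLERS.get(modality, "human_agent")


if __name__ == "__main__":
    inbox = [
        {"type": "text", "text": {"body": "Where is my order?"}},
        {"type": "audio", "audio": {"id": "media-1"}},
        {"type": "image", "image": {"id": "media-2", "caption": "Broken tap"}},
        {"type": "sticker"},
    ]
    for msg in inbox:
        print(msg["type"], "->", route_message(msg))
```

Keeping the routing table separate from the handlers makes it easy to add a new modality, such as video, without touching the rest of the agent.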

Why Multimodal AI is the “North Star” for WhatsApp Business

In 2026, WhatsApp is no longer just a “support channel”; it is an orchestrated communication layer. Multimodal AI agents are the key to unlocking its full potential.

- Human-Like Context: A multimodal agent doesn’t treat an image and a text message as separate events. It understands that if a customer sends a photo of a broken tap and says, “How do I fix this?”, the “this” refers to the object in the image.

- Accessibility & Inclusivity: Voice-first interactions allow users who are driving, visually impaired, or have limited literacy to interact with your business effortlessly.

- Hyper-Personalization: By analyzing voice tone and image context, AI can adjust its personality. A frustrated voice note triggers a more empathetic, urgent response, while a cheerful one results in a celebratory tone.

- Reduced Handling Time: According to 2026 industry data, voice AI integrated with visual context reduces handling time by 60–75% compared to traditional audio-only or text-only systems.
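
One simple way to get the “Human-Like Context” behaviour described above is to group messages that arrive close together from the same sender into a single turn, so the photo of the broken tap and the question “How do I fix this?” reach the model together. The sketch below assumes a 60-second window; the `Message` and `Turn` types and the `group_into_turns` helper are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Message:
    sender: str
    timestamp: int  # seconds since epoch
    kind: str       # "text", "audio" or "image"
    content: str    # text body or a media identifier


@dataclass
class Turn:
    sender: str
    messages: List[Message] = field(default_factory=list)


def group_into_turns(messages: List[Message], window_seconds: int = 60) -> List[Turn]:
    """Merge messages from one sender that arrive within the window."""
    turns: List[Turn] = []
    for msg in sorted(messages, key=lambda m: m.timestamp):
        # Find the most recent turn that belongs to this sender, if any.
        last = next((t for t in reversed(turns) if t.sender == msg.sender), None)
        if last is not None and msg.timestamp - last.messages[-1].timestamp <= window_seconds:
            last.messages.append(msg)
        else:
            turns.append(Turn(sender=msg.sender, messages=[msg]))
    return turns


if __name__ == "__main__":
    history = [
        Message("customer-a", 100, "image", "photo-of-broken-tap"),
        Message("customer-a", 110, "text", "How do I fix this?"),
        Message("customer-a", 500, "text", "Thanks!"),
    ]
    for turn in group_into_turns(history):
        print(turn.sender, [m.kind for m in turn.messages])
```

The window length is a judgment call: too short and the image is separated from its question, too long and unrelated messages get merged.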

Comparison: Traditional Chatbots vs. Multimodal AI Agents

| Feature | Traditional Chatbots (Pre-2025) | Multimodal AI Agents (2026) |
| --- | --- | --- |
| Input Type | Text Only | Text, Voice, Image, & Video |
| Understanding | Rule-based / Keyword-based | Contextual & Intent-Driven |
| Interaction | Linear & Rigid | Dynamic & Multi-turn |
| Emotion Detection | None | Voice Pitch & Sentiment Analysis |
| Resolution Power | High for FAQs | High for Complex, Visual Problems |
| Human Handoff | Often loses context | Seamless with Full Media History |

Real-World Use Cases: Multimodality in Action

How are Jaipur’s leading industries using these agents in 2026?

- E-commerce & Retail: A customer takes a photo of a dress in a magazine and sends it to your WhatsApp bot. The AI identifies the style, checks your “Premium Suit” catalog, and suggests three similar items.

- Healthcare: A patient sends a photo of a skin rash along with a voice note describing the itch. The AI Agent analyzes the image, logs the symptoms, and schedules an appointment with the right specialist.

- Real Estate & Construction: A site manager sends a 360-degree photo of a project. The AI Agent uses Computer Vision to identify progress against the architectural plan and updates the CRM automatically.

- Education: Students send photos of complex math problems. The AI Agent “sees” the equation, explains the solution via a voice note, and sends a PDF of similar practice problems.

✨ Build the Future with DialMeNow

At DialMeNow, we don’t just build bots; we build digital teammates. Our Multimodal AI Agents are designed to give your business a “Human Edge” at an automated scale.

- ✅ Native WhatsApp Voice Support: Our agents can handle inbound WhatsApp calls, transcribing and resolving queries without a human agent.

- ✅ Advanced Vision API: We integrate state-of-the-art computer vision to let your customers “show” you their problems.

- ✅ Omnichannel Memory: Our AI remembers a customer’s voice and preferences across WhatsApp, Instagram, and your website.

- ✅ Enterprise-Grade Security: Fully compliant with India’s DPDP Act 2023 and Meta’s 2026 data safety protocols.

The world isn’t just typing anymore. Are you listening?

❓ Frequently Asked Questions (FAQ)

Q1: What exactly is a “Multimodal” AI agent?

A: It is an AI that can understand and respond to different types of inputs, specifically text, voice recordings, and images, within a single conversation.

Q2: Can the AI really understand voice notes in different accents?

A: Yes. In 2026, speech recognition models and Large Language Models (LLMs) have been trained on diverse linguistic datasets, including regional Indian accents and “Hinglish.”

Q3: Is this available on the standard WhatsApp Business App?

A: No. Advanced multimodality and AI-driven intent detection require the WhatsApp Business API, which is accessed through a WhatsApp Business Account (WABA).

Q4: Can the AI agent identify products from a photo?

A: Yes. Using Computer Vision, the agent can recognize objects, colors, and even brand logos to match them with your inventory.

Q5: Is voice-based support more expensive?

A: While voice processing requires more computing power, the ROI is higher due to faster resolutions and improved customer satisfaction.

Q6: What happens if the AI makes a mistake?

A: Every multimodal agent should have a “Human-in-the-loop” fallback. If the AI is unsure (e.g., a blurry photo), it seamlessly transfers the chat to a human agent with the image already attached.
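
A human-in-the-loop fallback of this kind often comes down to a confidence threshold. The sketch below assumes the model reports a confidence score between 0 and 1 and uses an illustrative cut-off of 0.8; `AgentResult`, `decide_next_step`, and the threshold value are assumptions for the example, not part of any specific product.

```python
from dataclasses import dataclass
from typing import List

CONFIDENCE_THRESHOLD = 0.8  # assumed value; tune it for each use case


@dataclass
class AgentResult:
    answer: str
    confidence: float     # 0.0 to 1.0, as reported by the model
    media_ids: List[str]  # images or voice notes from the conversation


def decide_next_step(result: AgentResult) -> dict:
    """Reply automatically when confident, otherwise hand off with context."""
    if result.confidence >= CONFIDENCE_THRESHOLD:
        return {"action": "reply", "text": result.answer}
    # Low confidence (for example, a blurry photo): pass the media and the
    # draft answer along so the human agent does not start from scratch.
    return {
        "action": "handoff",
        "attachments": list(result.media_ids),
        "draft": result.answer,
    }


if __name__ == "__main__":
    blurry = AgentResult("Possibly a cracked screen", 0.41, ["media-7"])
    print(decide_next_step(blurry))
```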

Q7: Can I use this for international customers?

A: Absolutely. Our agents support 32+ languages and can automatically detect and switch languages mid-conversation.

Q8: How does this help with SEO and AEO?

A: By providing direct, high-quality “Answers” through voice and text, your brand becomes a “Source of Truth” that AI search engines (like Google Gemini) are more likely to cite.

Q9: Is my data safe if I send photos to an AI?

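A: WhatsApp encrypts messages in transit, but once a photo reaches a business’s systems for AI processing, it is governed by that business’s own privacy practices. At DialMeNow, we use enterprise-level safeguards to ensure image data is only used for your specific business task.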

Q10: Can the AI detect if a customer is angry from their voice?

A: Yes. Sentiment Analysis tools can detect frustration or stress in a voice note, allowing the system to prioritize the ticket for a human manager.
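
As a rough illustration of that prioritization step, the sketch below orders tickets by a frustration score. It assumes a sentiment model has already produced a score between 0 and 1 for each voice note; the `prioritize` helper and the ticket IDs are hypothetical.

```python
import heapq
from typing import List, Tuple


def prioritize(tickets: List[Tuple[str, float]]) -> List[str]:
    """Return ticket IDs ordered from most to least frustrated.

    Each ticket is (ticket_id, frustration_score), with the score in 0..1.
    """
    # heapq is a min-heap, so the score is negated to pop the highest first.
    # The arrival index breaks ties in favour of the earlier ticket.
    heap = [(-score, index, ticket_id) for index, (ticket_id, score) in enumerate(tickets)]
    heapq.heapify(heap)
    return [heapq.heappop(heap)[2] for _ in range(len(heap))]


if __name__ == "__main__":
    queue = [("t1", 0.2), ("t2", 0.9), ("t3", 0.9), ("t4", 0.5)]
    print(prioritize(queue))
```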

Q11: Can the AI generate images too?

A: Yes. In 2026, agents can create “Product Previews” or “Infographics” based on a customer’s text request.

Q12: Do I need a separate phone number for this?

A: No. You can integrate this with your existing WhatsApp Business API number.

Q13: What is “Agentic AI”?

A: It refers to AI that doesn’t just answer questions but takes proactive actions, like updating your CRM or processing a refund based on the conversation.
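
In code, the “takes actions” part is commonly a lookup from a detected intent to a function the agent is allowed to call. The sketch below is a minimal version of that idea; the intent names and the `update_crm` and `process_refund` functions are stand-ins that only return a description of what a real integration would do.

```python
from typing import Callable, Dict


def update_crm(customer_id: str, note: str) -> str:
    # Stand-in for a real CRM call.
    return f"CRM updated for {customer_id}: {note}"


def process_refund(customer_id: str, note: str) -> str:
    # Stand-in for a real payments call.
    return f"Refund queued for {customer_id}: {note}"


# Only intents listed here can trigger an action.
ACTIONS: Dict[str, Callable[[str, str], str]] = {
    "update_contact": update_crm,
    "request_refund": process_refund,
}


def act_on_intent(intent: str, customer_id: str, note: str) -> str:
    """Run the action mapped to the intent, or escalate if none exists."""
    action = ACTIONS.get(intent)
    if action is None:
        return "No action taken; escalate to a human agent."
    return action(customer_id, note)


if __name__ == "__main__":
    print(act_on_intent("request_refund", "cust-42", "damaged item"))
    print(act_on_intent("cancel_account", "cust-42", "wants to leave"))
```

An explicit allow-list like `ACTIONS` keeps the agent from taking steps nobody approved, which matters once refunds or record changes are involved.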

Q14: How long does it take to train the AI on my business data?

A: With “Upload-and-Go” setups, a basic agent can learn your FAQs and catalogs in less than 24 hours.

Q15: Why is 2026 the “Year of Multimodality”?

A: Because technology has finally reached a point where text, voice, and image processing happen in near real time with minimal lag, making the experience feel truly human.

Disclaimer

Multimodal AI performance depends on the quality of user input (e.g., clear photos, audible voice notes) and the robustness of the underlying LLM. While AI can approach human-like accuracy on many tasks in 2026, we always recommend human oversight for critical financial or medical decisions.

Conclusion

The integration of Text, Voice, and Image into WhatsApp is not just an upgrade; it is a reinvention of customer experience. In 2026, the most successful brands will be those that “see” their customers’ needs, “hear” their concerns, and “speak” to their aspirations. Don’t leave your customers stuck in a text-only past.